Language is the most intuitive way for us to express how we feel and what we want. However, despite recent advancements in artificial intelligence, it is still very hard to control a robot using natural language instructions. Free-form commands such as “Robot, please go a little slower when you pass close to my TV” or “Stay far away from the swimming pool!” are hard to parse into actionable robot behaviors, and most human-robot interfaces today still rely on complex strategies such as directly programming cost functions that define the desired behavior.

With our latest work, we attempt to change this reality through the introduction of “LaTTe: Language Trajectory Transformer”. LaTTe is a deep machine learning model that lets us send language commands to robots in an intuitive way. Given an input sentence from the user, the model fuses it with camera images of the objects that the robot observes in its surroundings, and outputs the desired robot behavior.

As an example, think of a user trying to control a robot barista that’s moving a wine bottle. Our method allows a non-technical user to control the robot’s behavior using only words, through a natural and simple interface. In this post, we explain in detail how we achieve this.

Unlocking the potential of language for robotics

The field of robotics traditionally uses task-specific programming modules, which need to be redesigned by an expert even when there are only minor changes in robot hardware, environment, or operational objectives. This inflexible approach is ripe for innovation with the latest advances in machine learning, which emphasize reusable modules that generalize well over large domains.

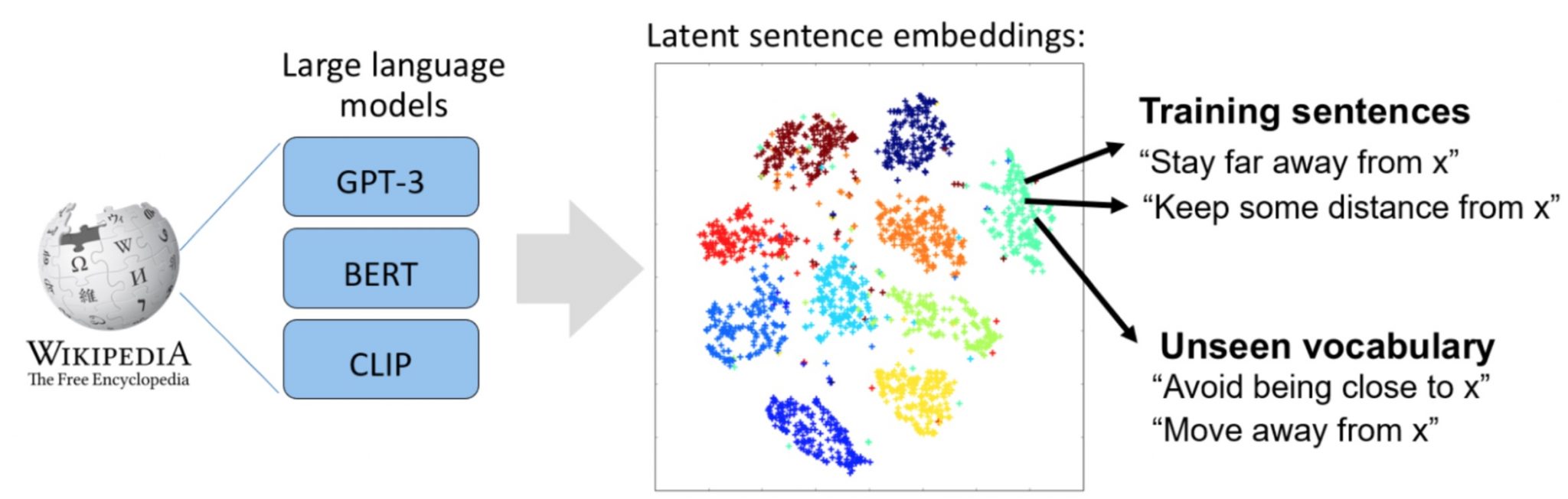

Given the intuitive and effective nature of language for general communication, it would be simpler if one could just tell the robot how they want it to behave, as opposed to having to reprogram the entire stack every time a change is needed. Large language models such as BERT, GPT-3 and Megatron-Turing have radically improved the quality of machine-generated text and our ability to solve natural language processing tasks, and models like CLIP extend our reach towards multi-modal domains with vision and language. Even so, we still see few examples of language being applied in robotics.

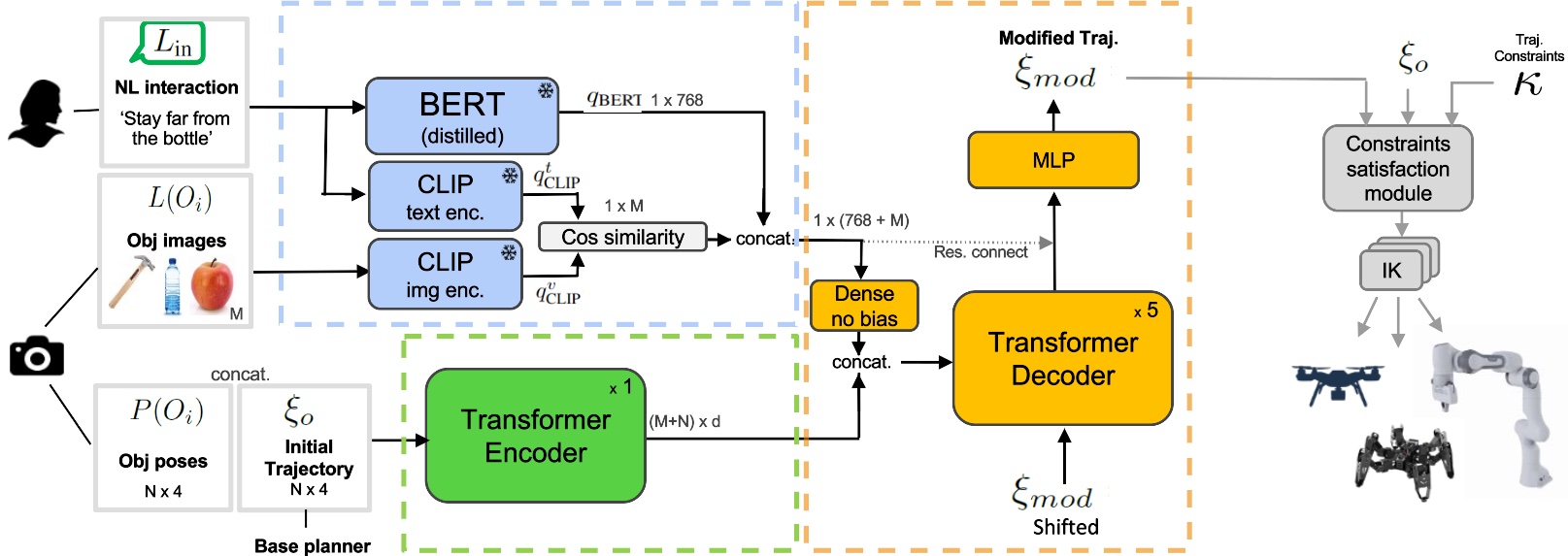

The goal of our work is to leverage information contained in existing vision-language pre-trained models to fill the gap in existing tools for human-robot interaction. Even though natural language is the richest form of communication between humans, modeling human-robot interactions using language is challenging because we often require vast amounts of data to train models or, classically, force the user to operate within a rigid set of instructions. To tackle these challenges, our framework makes use of two key ideas: first, we employ large pre-trained language models to provide rich representations of user intent, and second, we align geometrical trajectory data with natural language using a multi-modal attention mechanism.
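To give a rough sense of what attention between trajectory data and language looks like, here is a minimal NumPy sketch of scaled dot-product cross-attention. It is not the LaTTe architecture: the random arrays stand in for embeddings that a pre-trained language model would produce, and the names `cross_attention`, `text_tokens`, `traj_tokens` and `W_in` are hypothetical, chosen only for illustration.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability before exponentiating.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(queries, keys, values):
    """Scaled dot-product attention: every query row attends over all key rows."""
    d = queries.shape[-1]
    scores = queries @ keys.T / np.sqrt(d)   # (num_queries, num_keys)
    weights = softmax(scores, axis=-1)       # each row sums to 1
    return weights @ values, weights

rng = np.random.default_rng(0)
d_model = 16

# Assumption: placeholder for per-word embeddings of the user's command.
text_tokens = rng.normal(size=(6, d_model))

# Assumption: 10 waypoints stored as (x, y, z, speed), lifted to d_model
# dimensions with a random linear map standing in for a learned projection.
waypoints = rng.normal(size=(10, 4))
W_in = rng.normal(size=(4, d_model))
traj_tokens = waypoints @ W_in

# Each waypoint token gathers information from the words of the command.
fused, weights = cross_attention(traj_tokens, text_tokens, text_tokens)
```

Here `fused` holds one language-conditioned feature vector per waypoint, and `weights` shows how strongly each waypoint attends to each word. In a trained model the projections and embeddings would be learned rather than random.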

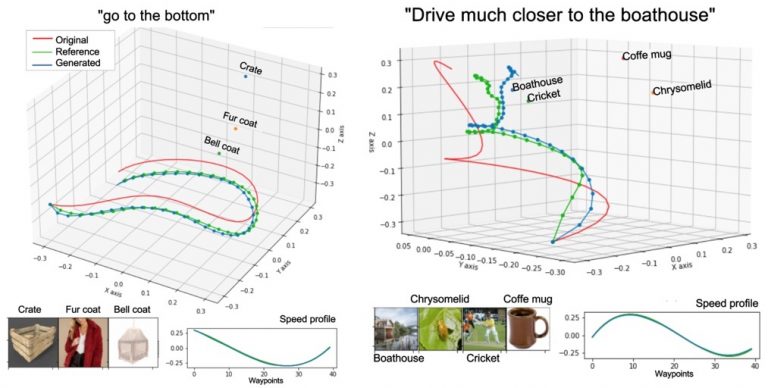

We test our model on multiple robotic platforms, from manipulators to drones, and show that its functionality is agnostic to the robot form factor, dynamics, and motion controller. Our goal is to enable a factory worker to quickly reshape a robot arm trajectory so that it stays farther away from fragile objects, or to allow a drone pilot to command the drone to slow down when close to buildings, all without requiring extensive technical expertise.

Combining language and geometry into a single robotics model

Our overall goal is to provide a flexible interface for human-robot interaction within the context of trajectory reshaping that is agnostic to robotic platforms. The diagram below shows an overview of the system. We assume that the robot’s behavior is expressed through a 3D trajectory over time, and that the user provides a natural language command to reshape that behavior, referring to particular things in the scene such as the objects in the robot workspace. Our trajectory generation system outputs a sequence of waypoints, each with an XYZ position and a velocity, which are calculated by fusing scene geometry, scene images, and the user’s language input.
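To make the trajectory representation concrete, the sketch below stores a trajectory as an array of waypoints and applies a hand-written rule that mimics the effect of a command like “go a little slower when you pass close to my TV”. This is only a stand-in for what a reshaped output might look like, not the learned model. The `(x, y, z, speed)` row layout, the scalar speed, and the function `slow_down_near` with its `radius` and `factor` parameters are all assumptions made for illustration.

```python
import numpy as np

def slow_down_near(trajectory, obj_xyz, radius, factor):
    """Scale the speed of waypoints that lie within `radius` of an object.

    trajectory: (N, 4) array with rows [x, y, z, speed] (assumed layout).
    Returns a modified copy; positions are left unchanged.
    """
    traj = np.asarray(trajectory, dtype=float).copy()
    obj = np.asarray(obj_xyz, dtype=float)
    dist = np.linalg.norm(traj[:, :3] - obj, axis=1)  # distance per waypoint
    traj[dist < radius, 3] *= factor
    return traj

# Straight-line path along x at a constant speed of 1.0.
xs = np.linspace(0.0, 4.0, 5)
original = np.column_stack([xs, np.zeros(5), np.zeros(5), np.ones(5)])

# Hypothetical object position (the "TV") next to the middle of the path.
tv = (2.0, 0.0, 0.0)
reshaped = slow_down_near(original, tv, radius=1.5, factor=0.5)
```

The three waypoints within 1.5 units of the object have their speed halved, while the first and last keep their original speed. A language-driven system replaces the hard-coded rule with a model that infers which object and which kind of change the user means.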

Examples with aerial vehicles:

Examples with a hexapod robot: